The rationale for a value-weighted index can be paraphrased as follows:

- Most investors, pros included, can’t beat the index. Therefore, buying an index fund is better than messing it up yourself or getting an active manager to mess it up for you.

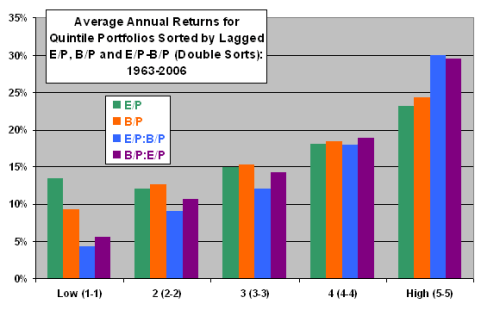

- If you’re going to buy an index, you might as well buy the best one. An index based on the market capitalization-weighted S&P500 will be handily beaten by an equal-weighted index, which will be handily beaten by a fundamentally weighted index, which is in turn handily beaten by a “value-weighted index,” which is what Greenblatt calls his “Magic Formula-weighted index.”

According to Greenblatt, the second point looks like this:

Market Capitalization-Weight < Equal Weight < Fundamental Weight < “Value Weight” (Greenblatt’s Magic Formula Weight)

In chart form (from Joel Greenblatt’s Value Weighted Index):

There is an argument to be made that the second point could be as follows:

Market Capitalization-Weight < Equal Weight < “Value Weight” (Greenblatt’s Magic Formula Weight) <= Fundamental Weight

Fundamental Weight could potentially deliver better returns than “Value” Weight, if we select the correct fundamentals.

The classic paper on fundamental indexation is the 2004 paper “Fundamental Indexation” by Robert Arnott (Chairman of Research Affiliates), Jason Hsu and Philip Moore. The paper is very readable. Arnott et al. argue that it should be possible to construct stock market indexes that are more efficient than those based on market capitalization. From the abstract:

In this paper, we examine a series of equity market indexes weighted by fundamental metrics of size, rather than market capitalization. We find that these indexes deliver consistent and significant benefits relative to standard capitalization-weighted market indexes. These indexes exhibit similar beta, liquidity and capacity compared to capitalization-weighted equity market indexes and have very low turnover. They show annual returns that are on average 213 basis points higher than equivalent capitalization-weighted indexes over the 42 years of the study. They contain most of the same stocks found in the traditional equity market indexes, but the weights of the stocks in these new indexes differ materially from their weights in capitalization-weighted indexes. Selection of companies and their weights in the indexes are based on simple measures of firm size such as book value, income, gross dividends, revenues, sales, and total company employment.

Arnott et al. seek to create alternative indices that are as efficient “as the usual capitalization-weighted market indexes, while retaining the many benefits of capitalization-weighting for the passive investor,” which include, for example, lower trading costs and fees than active management.

Interestingly, they find a high degree of correlation between market capitalization-weighted indices and fundamental indexation:

We find most alternative measures of firm size such as book value, income, sales, revenues, gross dividends or total employment are highly correlated with capitalization and liquidity, which means these Fundamental Indexes are also primarily concentrated in the large capitalization stocks, preserving the liquidity and capacity benefits of traditional capitalization-weighted indexes. In addition, as compared with conventional capitalization-weighted indexes, these Fundamental Indexes typically have substantially identical volatilities, and CAPM betas and correlations exceeding 0.95. The market characteristics that investors have traditionally gained exposure to, through holding capitalization-weighted market indexes, are equally accessible through these Fundamental Indexes.

The main problem with the equal-weight indexes we looked at last week is the high turnover to maintain the equal weighting. Fundamental indexation could potentially suffer from the same problem:

Maintaining low turnover is the most challenging aspect in the construction of Fundamental Indexes. In addition to the usual reconstitution, a certain amount of rebalancing is also needed for the Fundamental Indexes. If a stock price goes up 10%, its capitalization also goes up 10%. The weight of that stock in the Fundamental Index will at some interval need to be rebalanced to its Fundamental weight in that index. If the rebalancing periods are too long, the difference between the policy weights and actual portfolio weights become so large that some of the suspected negative attributes associated with capitalization weighting may be reintroduced.
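
To make the drift concrete, here is a minimal sketch of what happens to a fundamentally weighted portfolio when one stock’s price rises 10% and nothing else changes. The two tickers, the 50/50 policy weights and the helper name `drifted_weights` are made up for illustration; they are not from the paper.

```python
def drifted_weights(policy_weights, price_returns):
    """Return portfolio weights after prices move, with no rebalancing."""
    grown = {t: w * (1.0 + price_returns[t]) for t, w in policy_weights.items()}
    total = sum(grown.values())
    return {t: v / total for t, v in grown.items()}


# Hypothetical two-stock index with equal fundamental (policy) weights.
policy = {"AAA": 0.5, "BBB": 0.5}

# AAA's price rises 10%; its fundamentals and BBB's price are unchanged.
actual = drifted_weights(policy, {"AAA": 0.10, "BBB": 0.0})

# Trade needed in each stock to return to the policy weights.
trades = {t: policy[t] - actual[t] for t in policy}

# One-way turnover: half the sum of the absolute weight changes.
turnover = 0.5 * sum(abs(x) for x in trades.values())

print(actual)
print(turnover)
```

In this toy case AAA drifts from 50% to roughly 52.4% of the portfolio, so getting back to policy means selling about 2.4% of the portfolio in AAA and buying the same amount of BBB. The longer the gap between rebalances, the larger that drift can become.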

Arnott et al construct their indices as follows:

[We] rank all companies by each metric, then select the 1000 largest. Each of these 1000 largest is included in the index, at its relative metric weight, to create the Fundamental Index for that metric. The measures of firm size we use in this study are:

• book value (designated by the shorthand “book” later in this paper),

• trailing five-year average operating income (“income”),

• trailing five-year average revenues (“revenue”),

• trailing five-year average sales (“sales”),

• trailing five-year average gross dividend (“dividend”),

• total employment (“employment”).

We also examine a composite, equally weighting four of the above fundamental metrics of size (“composite”). This composite excludes the total employment because that is not always available, and sales because sales and revenues are so very similar. The four metrics used in the composite are widely available in most countries, so that the Composite Fundamental Index could easily be applied internationally, globally and even in the emerging markets.

…

The index is rebalanced on the last trading day of each year, using the end of day prices. We hold this portfolio until the end of the next year, at which point we use the most recent company financial information to calculate the following year’s index weights.

We rebalance the index only once a year, on the last trading day of the year, for two reasons. First, the financial data available through Compustat are available only on an annual basis in the earliest years of our study. Second, when we try monthly, quarterly, and semi-annual rebalancing, we increase index turnover but find no appreciable return advantage over annual rebalancing.
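
The construction described above can be sketched in a few lines. This is only one reading of the quoted method, under stated assumptions: the company names and figures are invented, all metric values are assumed positive, and the composite is taken to be the simple average of each company’s share of the four metrics (book, income, revenue, dividend). The function names `fundamental_weights` and `composite_weights` are hypothetical, not from the paper.

```python
def fundamental_weights(metric_by_company, n_largest=1000):
    """Rank by one size metric, keep the n largest, weight by metric share."""
    ranked = sorted(metric_by_company.items(), key=lambda kv: kv[1], reverse=True)
    selected = ranked[:n_largest]
    total = sum(value for _, value in selected)
    return {name: value / total for name, value in selected}


def composite_weights(metrics, n_largest=1000):
    """Average each company's share of every metric, then keep the n largest.

    Assumption: the composite equally weights the per-metric shares.
    """
    companies = set.intersection(*(set(m) for m in metrics.values()))
    totals = {name: sum(m[c] for c in companies) for name, m in metrics.items()}
    score = {
        c: sum(metrics[name][c] / totals[name] for name in metrics) / len(metrics)
        for c in companies
    }
    return fundamental_weights(score, n_largest)


# Invented data for three hypothetical companies.
metrics = {
    "book": {"AAA": 50.0, "BBB": 30.0, "CCC": 20.0},
    "income": {"AAA": 10.0, "BBB": 30.0, "CCC": 60.0},
    "revenue": {"AAA": 40.0, "BBB": 40.0, "CCC": 20.0},
    "dividend": {"AAA": 20.0, "BBB": 20.0, "CCC": 60.0},
}

book_index = fundamental_weights(metrics["book"], n_largest=2)
composite_index = composite_weights(metrics, n_largest=3)

print(book_index)
print(composite_index)
```

Under annual rebalancing, these weights would be recomputed once a year from the latest financial data and the portfolio reset to them on the last trading day of the year.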

Performance of the fundamental indices

The returns produced by the fundamental indices are, on average, 1.91 percent higher than the S&P 500. The best of the fundamental indexes outpaces the Reference Capitalization index by 2.50% per annum:

Surprisingly, the composite rivals the performance of the average, even though it excludes two of the three best Fundamental Indexes! Most of these indexes outpace the equal-weighted index of the top 1000 by capitalization, with lower risk, lower beta.

Note that the “Reference Capitalization index” is a 1000-stock capitalization-weighted equity market index that bears close resemblance to the highly regarded Russell 1000, although it is not identical. The construction of the Reference Capitalization index allows Arnott et al to “make direct comparisons with the Fundamental Indexes uncomplicated by questions of float, market impact, subjective selections, and so forth.”

Value-Added

In the “value-added” chart Arnott et al examine the correlation of the value added for the various indexes, net of the return for the Reference Capitalization index, with an array of asset classes.

Here, we find differences that are more interesting, though often lacking in statistical significance. The S&P 500 would seem to outpace the Reference Capitalization index when the stock market is rising, the broad US bond market is rising (i.e., interest rates are falling), and high-yield bonds, emerging markets bonds and REITS are performing badly. The Fundamental Indexes have mostly the opposite characteristics, performing best when US and non-US stocks are falling and REITS are rising. Curiously, they mostly perform well when High Yield bonds are rising but Emerging Markets bonds are falling. Also, they tend to perform well when TIPS are rising (i.e., real interest rates are falling). Most of these results are unsurprising; but, apart from the S&P and REIT correlations, most are also not statistically significant.
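
For readers who want to see the mechanics, the “value added” correlation can be sketched as follows: subtract the Reference Capitalization return from an index’s return each period, then correlate that difference with an asset class’s return. The return series below are invented purely for illustration (they are not the paper’s data), and the helper names `value_added` and `pearson` are hypothetical.

```python
from math import sqrt


def value_added(index_returns, reference_returns):
    """Per-period return of an index net of the reference index."""
    return [r - b for r, b in zip(index_returns, reference_returns)]


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented period returns, for illustration only.
reference = [0.02, -0.01, 0.03, 0.00]
asset_class = [0.01, -0.02, 0.04, 0.00]

# A made-up index that lags the reference when the asset class rises.
index = [r - 0.5 * a for r, a in zip(reference, asset_class)]

added = value_added(index, reference)
correlation = pearson(added, asset_class)

print(correlation)
```

A negative correlation here means the index tends to add value over the reference when that asset class is falling, which is the kind of pattern the quoted passage describes for the Fundamental Indexes and stocks. With real data the coefficients would be far from the perfect -1 that this contrived series produces, and, as the authors note, many would not be statistically significant.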

Commentary

Arnott et al make some excellent points in the paper:

We believe the performance of these Fundamental Indexes are largely free of data mining. Our selection of size metrics were intuitive and were not selected ex post, based upon results. We use no subjective stock selection or weighting decisions in their construction, and the portfolios are not fine-tuned in any way. Even so, we acknowledge that our research may be subject to the following – largely unavoidable – criticisms:

• we lived through the period covered by this research (1/1962-12/2003); we experienced bubble periods where cap-weighting caused severe destruction of investor wealth, contributing to our concern about the efficacy of capitalization-weighted indexation (the “nifty fifty” of 1971-72, the bubble of 1999-2000) and

• our Fundamental metrics of size, such as book value, revenues, smoothed earnings, total employment, and so forth, all implicitly introduce a value bias, amply documented as possible market inefficiencies or as priced risk factors. (Reciprocally, it can be argued that capitalization-weighted indexes have a growth bias, whereas the Fundamental Indexes do not.)

They also make some interesting commentary about global diversification using fundamental indexation:

For international and global portfolios, it’s noteworthy that Fundamental Indexing introduces a more stable country allocation than capitalization weighting. Just as the Fundamental Indexes smooth the movement of sector and industry allocations to mirror the evolution of each sector or industry’s scale in the overall economy, a global Fundamental Indexes index will smooth the movement of country allocations, mirroring the relative size of each country’s scale in the global economy. In other words, a global Fundamental Indexes index should offer the same advantages as GDP-weighted global indexing, with the same rebalancing “alpha” enjoyed by GDP-weighting. We would argue that the “alpha” from GDP-weighting in international portfolios is perhaps attributable to the elimination of the same capitalization-weighted return drag (from overweighting the overvalued countries and underweighting the undervalued countries) as we observe in the US indexes. This is the subject of some current research that we hope to publish in the coming year.

And finally:

This method outpaces most active managers, by a much greater margin and with more consistency, than conventional capitalization-weighted indexes. This need not argue against active management; it only suggests that active managers have perhaps been using the wrong “market portfolio” as a starting point, making active management “bets” relative to the wrong index. If an active management process can add value, then it should perform far better if it makes active bets against one of these Fundamental Indexes than against capitalization-weighted indexes.
