The second method for boosting the performance of book value as a predictor of future investment returns is Joseph D. Piotroski’s elegant F_SCORE. Piotroski first discussed his F_SCORE in 2002 in “Value Investing: The Use of Historical Financial Statement Information to Separate Winners from Losers.” In the paper, Piotroski examines whether the application of a simple accounting-based fundamental analysis strategy to a broad portfolio of high book-to-market firms can improve the returns earned by an investor. Piotroski found that his method increased the mean return earned by a low price-to-book investor “by at least 7 1/2% annually” through the “selection of financially strong high BM firms.”

In addition, an investment strategy that buys expected winners and shorts expected losers generates a 23% annual return between 1976 and 1996, and the strategy appears to be robust across time and to controls for alternative investment strategies.

With a return of that magnitude, it’s well worth a deeper look.

Piotroski’s rationale

Piotroski uses “context-specific financial performance measures to differentiate strong and weak firms:”

Instead of examining the relationships between future returns and particular financial signals, I aggregate the information contained in an array of performance measures and form portfolios on the basis of a firm’s overall signal. By focusing on value firms, the benefits to financial statement analysis (1) are investigated in an environment where historical financial reports represent both the best and most relevant source of information about the firm’s financial condition and (2) are maximized through the selection of relevant financial measures given the underlying economic characteristics of these high BM firms.

F_SCORE

On the assumption that the “average high BM firm is financially distressed,” Piotroski chose nine fundamental signals to measure three areas of the firm’s financial condition: profitability, financial leverage/liquidity, and operating efficiency:

In this paper, I classify each firm’s signal realization as either “good” or “bad,” depending on the signal’s implication for future prices and profitability. An indicator variable for the signal is equal to one (zero) if the signal’s realization is good (bad). I define the aggregate signal measure, F_SCORE, as the sum of the nine binary signals. The aggregate signal is designed to measure the overall quality, or strength, of the firm’s financial position, and the decision to purchase is ultimately based on the strength of the aggregate signal.

F_SCORE component: Profitability

On the basis that “current profitability and cash flow realizations provide information about the firm’s ability to generate funds internally,” Piotroski uses four variables to measure these performance-related factors: ROA, CFO, [Delta]ROA, and ACCRUAL:

I define ROA and CFO as net income before extraordinary items and cash flow from operations, respectively, scaled by beginning of the year total assets. If the firm’s ROA (CFO) is positive, I define the indicator variable F_ROA (F_CFO) equal to one, zero otherwise. I define [Delta]ROA as the current year’s ROA less the prior year’s ROA. If [Delta]ROA > 0, the indicator variable F_[Delta]ROA equals one, zero otherwise.

…

I define the variable ACCRUAL as current year’s net income before extraordinary items less cash flow from operations, scaled by beginning of the year total assets. The indicator variable F_ACCRUAL equals one if CFO > ROA, zero otherwise.
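The four profitability signals quoted above can be sketched in a few lines of Python. This is a minimal illustration, not Piotroski's own code: the function name, argument names, and dictionary keys are hypothetical, and the prior year's ROA is assumed to have been computed the same way (scaled by that year's beginning total assets).

```python
def profitability_signals(net_income, cash_flow_ops, assets_begin, prior_roa):
    """Return the four binary profitability signals for one firm-year.

    net_income    : net income before extraordinary items (current year)
    cash_flow_ops : cash flow from operations (current year)
    assets_begin  : total assets at the beginning of the current year
    prior_roa     : prior year's ROA (assumed computed the same way)
    All names here are illustrative assumptions, not taken from the paper.
    """
    roa = net_income / assets_begin
    cfo = cash_flow_ops / assets_begin
    delta_roa = roa - prior_roa
    return {
        "F_ROA": int(roa > 0),
        "F_CFO": int(cfo > 0),
        "F_DELTA_ROA": int(delta_roa > 0),
        # ACCRUAL = ROA - CFO; the signal is good when CFO exceeds ROA.
        "F_ACCRUAL": int(cfo > roa),
    }


# Hypothetical firm: profitable, improving, and with cash flow above earnings.
example = profitability_signals(
    net_income=5.0, cash_flow_ops=8.0, assets_begin=100.0, prior_roa=0.03
)
```

Note that F_ACCRUAL rewards firms whose cash flow exceeds reported earnings, so a firm can score on F_ACCRUAL and F_CFO while still failing F_ROA.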

F_SCORE component: Leverage, liquidity, and source of funds

Because “most high BM firms are financially constrained,” Piotroski assumes that an increase in leverage, a deterioration of liquidity, or the use of external financing is a bad signal about financial risk. Three of the nine financial signals are therefore designed to measure changes in capital structure and the firm’s ability to meet future debt service obligations: [Delta]LEVER, [Delta]LIQUID, and EQ_OFFER:

[Delta]LEVER seeks to capture changes in the firm’s long-term debt levels:

I measure [Delta]LEVER as the historical change in the ratio of total long-term debt to average total assets, and view an increase (decrease) in financial leverage as a negative (positive) signal. By raising external capital, a financially distressed firm is signaling its inability to generate sufficient internal funds (e.g., Myers and Majluf 1984, Miller and Rock 1985). In addition, an increase in long-term debt is likely to place additional constraints on the firm’s financial flexibility. I define the indicator variable F_[Delta]LEVER to equal one (zero) if the firm’s leverage ratio fell (rose) in the year preceding portfolio formation.

[Delta]LIQUID seeks to measure the historical change in the firm’s current ratio between the current and prior year, where Piotroski defines the current ratio as the ratio of current assets to current liabilities at fiscal year-end:

I assume that an improvement in liquidity (i.e., [Delta]LIQUID > 0) is a good signal about the firm’s ability to service current debt obligations. The indicator variable F_[Delta]LIQUID equals one if the firm’s liquidity improved, zero otherwise.

Piotroski argues that financially distressed firms raising external capital “could be signaling their inability to generate sufficient internal funds to service future obligations” and the fact that these firms are willing to issue equity when their stock prices are depressed “highlights the poor financial condition facing these firms.” EQ_OFFER captures whether a firm has issued equity in the year preceding portfolio formation. It is set to one if the firm did not issue common equity in the year preceding portfolio formation, zero otherwise.
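As a rough sketch, the three capital-structure signals described in this section might be computed as follows. The function and argument names are hypothetical. The text does not say how an unchanged leverage ratio is scored, so treating "unchanged" as zero is an assumption made here for illustration only.

```python
def financing_signals(
    lt_debt, avg_assets, lt_debt_prior, avg_assets_prior,
    cur_assets, cur_liab, cur_assets_prior, cur_liab_prior,
    issued_common_equity,
):
    """Return the leverage, liquidity, and equity-issuance signals.

    All argument names are illustrative assumptions, not from the paper.
    """
    # Change in long-term debt scaled by average total assets.
    delta_lever = lt_debt / avg_assets - lt_debt_prior / avg_assets_prior
    # Change in the current ratio (current assets / current liabilities).
    delta_liquid = cur_assets / cur_liab - cur_assets_prior / cur_liab_prior
    return {
        # Good signal when leverage fell; unchanged leverage scores 0 (assumption).
        "F_DELTA_LEVER": int(delta_lever < 0),
        "F_DELTA_LIQUID": int(delta_liquid > 0),
        # Good signal when the firm did NOT issue common equity.
        "EQ_OFFER": int(not issued_common_equity),
    }


# Hypothetical firm that paid down debt, improved liquidity, and issued no equity.
example = financing_signals(
    lt_debt=20.0, avg_assets=100.0, lt_debt_prior=30.0, avg_assets_prior=100.0,
    cur_assets=150.0, cur_liab=100.0, cur_assets_prior=120.0, cur_liab_prior=100.0,
    issued_common_equity=False,
)
```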

F_SCORE component: Operating efficiency

Piotroski’s two remaining signals seek to measure “changes in the efficiency of the firm’s operations”: [Delta]MARGIN and [Delta]TURN. Piotroski believes these ratios are important because they “reflect two key constructs underlying a decomposition of return on assets.”

Piotroski defines [Delta]MARGIN as the firm’s current gross margin ratio (gross margin scaled by total sales) less the prior year’s gross margin ratio:

An improvement in margins signifies a potential improvement in factor costs, a reduction in inventory costs, or a rise in the price of the firm’s product. The indicator variable F_[Delta]MARGIN equals one if [Delta]MARGIN is positive, zero otherwise.

Piotroski defines [Delta]TURN as the firm’s current year asset turnover ratio (total sales scaled by beginning of the year total assets) less the prior year’s asset turnover ratio:

An improvement in asset turnover signifies greater productivity from the asset base. Such an improvement can arise from more efficient operations (fewer assets generating the same levels of sales) or an increase in sales (which could also signify improved market conditions for the firm’s products). The indicator variable F_[Delta]TURN equals one if [Delta]TURN is positive, zero otherwise.
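The two operating-efficiency signals follow the same pattern: compute a ratio for the current and prior year, then score the sign of the change. The sketch below uses hypothetical function and argument names and assumes the prior-year ratios use that year's own sales and beginning-of-year assets.

```python
def efficiency_signals(
    gross_margin, sales, assets_begin,
    gross_margin_prior, sales_prior, assets_begin_prior,
):
    """Return the margin and turnover signals for one firm-year.

    Argument names are illustrative assumptions, not from the paper.
    """
    # Gross margin ratio: gross margin scaled by total sales.
    delta_margin = gross_margin / sales - gross_margin_prior / sales_prior
    # Asset turnover: total sales scaled by beginning-of-year total assets.
    delta_turn = sales / assets_begin - sales_prior / assets_begin_prior
    return {
        "F_DELTA_MARGIN": int(delta_margin > 0),
        "F_DELTA_TURN": int(delta_turn > 0),
    }


# Hypothetical firm: margin improved (0.35 to 0.40), but the asset base grew
# while sales stayed flat, so turnover fell.
example = efficiency_signals(
    gross_margin=40.0, sales=100.0, assets_begin=200.0,
    gross_margin_prior=35.0, sales_prior=100.0, assets_begin_prior=180.0,
)
```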

F_SCORE formula and interpretation

Piotroski defines F_SCORE as the sum of the individual binary signals, or

F_SCORE = F_ROA + F_[Delta]ROA + F_CFO + F_ACCRUAL + F_[Delta]MARGIN + F_[Delta]TURN + F_[Delta]LEVER + F_[Delta]LIQUID + EQ_OFFER.

An F_SCORE ranges from a low of 0 to a high of 9, where a low (high) F_SCORE represents a firm with very few (mostly) good signals. To the extent current fundamentals predict future fundamentals, I expect F_SCORE to be positively associated with changes in future firm performance and stock returns. Piotroski’s investment strategy is to select firms with high F_SCORE signals.
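Since the aggregate is just a sum of nine zero-or-one indicators, it can be sketched as a small function that checks its inputs and adds them up. The dictionary keys below are ASCII spellings chosen for this illustration, not names from the paper.

```python
SIGNAL_NAMES = (
    "F_ROA", "F_DELTA_ROA", "F_CFO", "F_ACCRUAL",
    "F_DELTA_MARGIN", "F_DELTA_TURN",
    "F_DELTA_LEVER", "F_DELTA_LIQUID", "EQ_OFFER",
)


def f_score(signals):
    """Sum the nine binary signals; the result is an integer from 0 to 9."""
    missing = [name for name in SIGNAL_NAMES if name not in signals]
    if missing:
        raise KeyError(f"missing signals: {missing}")
    for name in SIGNAL_NAMES:
        if signals[name] not in (0, 1):
            raise ValueError(f"{name} must be 0 or 1, got {signals[name]!r}")
    return sum(signals[name] for name in SIGNAL_NAMES)


# Hypothetical firm with every signal good except turnover and liquidity.
example = dict.fromkeys(SIGNAL_NAMES, 1)
example["F_DELTA_TURN"] = 0
example["F_DELTA_LIQUID"] = 0
score = f_score(example)
```

Because every signal carries equal weight, the score says how many of the nine tests a firm passed, not which ones.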

Piotroski’s methodology

Piotroski identified firms with sufficient stock price and book value data on COMPUSTAT each year between 1976 and 1996. For each firm, he calculated the market value of equity and BM ratio at fiscal year-end. Each fiscal year (i.e., financial report year), he ranked all firms with sufficient data to identify book-to-market quintile and size tercile cutoffs and classified them into BM quintiles. Piotroski’s final sample size was 14,043 high BM firms across the 21 years.
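To make the quintile step concrete, here is a minimal sketch of picking out the top book-to-market quintile from a cross-section of firms. It relies on the standard library's `statistics.quantiles` with its default interpolation method, which is an assumption of this sketch. The paper's exact cutoff procedure may differ, and the firm labels and BM values are invented.

```python
from statistics import quantiles


def high_bm_firms(bm_by_firm):
    """Return the firms whose BM ratio lies above the top quintile cutoff."""
    # quantiles(..., n=5) returns four cut points; the last one separates
    # the highest quintile from the rest.
    cutoffs = quantiles(bm_by_firm.values(), n=5)
    top_cutoff = cutoffs[-1]
    return {firm for firm, bm in bm_by_firm.items() if bm > top_cutoff}


# Ten hypothetical firms; the two with the highest BM ratios form the top quintile.
bm_ratios = {
    "A": 0.3, "B": 0.5, "C": 0.7, "D": 0.9, "E": 1.1,
    "F": 1.3, "G": 1.5, "H": 1.7, "I": 1.9, "J": 2.5,
}
top_quintile = high_bm_firms(bm_ratios)
```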

He measured firm-specific returns as one-year (two-year) buy-and-hold returns earned from the beginning of the fifth month after the firm’s fiscal year-end through the earliest subsequent date: one year (two years) after return compounding began or the last day of CRSP traded returns. If a firm delisted, he assumed the delisting return is zero. He defined market-adjusted returns as the buy-and-hold return less the value-weighted market return over the corresponding time period.
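The return measure can be sketched as compounding periodic returns into a buy-and-hold return and subtracting the market's buy-and-hold return over the same window. The sketch assumes monthly return series as inputs, and that the caller has already truncated both series at the last traded month for a delisted firm. Both are assumptions for illustration, and the function names are hypothetical.

```python
from math import prod


def buy_and_hold_return(periodic_returns):
    """Compound a sequence of simple periodic returns into one holding-period return."""
    return prod(1.0 + r for r in periodic_returns) - 1.0


def market_adjusted_return(firm_returns, market_returns):
    """Firm buy-and-hold return less the market's over the same periods."""
    if len(firm_returns) != len(market_returns):
        raise ValueError("firm and market series must cover the same periods")
    return buy_and_hold_return(firm_returns) - buy_and_hold_return(market_returns)


# Two hypothetical months: the firm compounds to 4.5%, the market to 3.02%.
adjusted = market_adjusted_return([0.10, -0.05], [0.02, 0.01])
```

Compounding first and subtracting second is what makes this a buy-and-hold measure. Summing monthly excess returns would give a different number.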

Descriptive evidence of high book-to-market firms

Piotroski provides descriptive statistics about the financial characteristics of the high book-to-market portfolio of firms, as well as evidence on the long-run returns from such a portfolio. Firms in the highest book-to-market quintile have a mean (median) BM ratio of 2.444 (1.721) and a mean (median) end-of-year market capitalization of $188.50 million ($14.37 million). Consistent with the evidence presented in Fama and French (1995), the portfolio of high BM firms consists of poorly performing firms; the average (median) ROA realization is –0.0054 (0.0128), and the average and median firm saw declines in both ROA (–0.0096 and –0.0047, respectively) and gross margin (–0.0324 and –0.0034, respectively) over the last year. Finally, the average high BM firm saw an increase in leverage and a decrease in liquidity over the prior year.

High BM returns

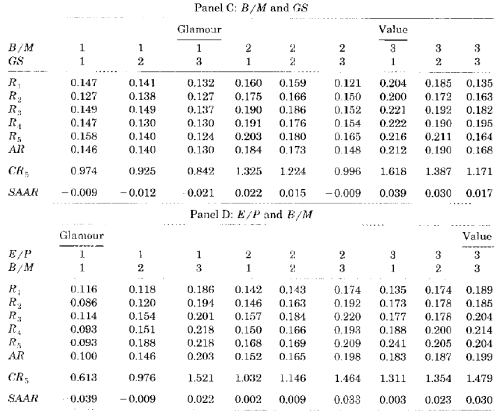

The table above (Panel B of Table 1), extracted from the paper, presents the one-year and two-year buy-and-hold returns for the complete portfolio of high BM firms, along with the percentage of firms in the portfolio with positive raw and market-adjusted returns over the respective investment period. Consistent with the findings in the Fama and French (1992) and Lakonishok, Shleifer, and Vishny (1994) studies, high BM firms earn positive market-adjusted returns in the one-year and two-year periods following portfolio formation.

Perhaps Piotroski’s most interesting finding is that, despite the strong mean performance of this portfolio, a majority of the firms (approximately 57%) earn negative market-adjusted returns over the one- and two-year windows. Piotroski concludes, therefore, that any strategy that can eliminate the left tail of the return distribution (i.e., the negative return observations) will greatly improve the portfolio’s mean return performance.
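A toy example shows how a portfolio can have a positive mean return while most of its members lose money, and why trimming the left tail lifts the mean. The seven returns below are invented for illustration and are not data from the paper. The -0.12 cutoff is likewise arbitrary.

```python
from statistics import mean


def summarize(returns):
    """Return (mean return, share of observations with a negative return)."""
    share_negative = sum(r < 0 for r in returns) / len(returns)
    return mean(returns), share_negative


# Hypothetical market-adjusted returns: four losers, three winners.
returns = [-0.20, -0.15, -0.10, -0.05, 0.10, 0.30, 0.80]
full_mean, full_share_negative = summarize(returns)

# Drop the two worst observations (an arbitrary cutoff, for illustration).
trimmed = [r for r in returns if r > -0.12]
trimmed_mean, _ = summarize(trimmed)
```

In this made-up sample, four of seven firms (about 57%) have negative returns, yet the mean is roughly 0.10. Removing the two worst observations raises the mean to roughly 0.21.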

High BM and F_SCORE returns

Panel A of Table 3 of the paper shows the one-year market-adjusted returns to the Piotroski F_SCORE strategy.

The table demonstrates the return difference between the portfolio of high F_SCORE firms and the complete portfolio of high BM firms. High F_SCORE firms earn a mean market-adjusted return of 0.134 versus 0.059 for the entire BM quintile. The return improvements also extend beyond the mean performance of the various portfolios. The results in the table show that the 10th percentile, 25th percentile, median, 75th percentile, and 90th percentile returns of the high F_SCORE portfolio are significantly higher than the corresponding returns of both the low F_SCORE portfolio and the complete high BM quintile portfolio using bootstrap techniques. Similarly, the proportion of winners in the high F_SCORE portfolio, 50.0%, is significantly higher than in the two benchmark portfolios (43.7% and 31.8%). Overall, it is clear that F_SCORE discriminates between eventual winners and losers.

Conclusion

Piotroski’s F_SCORE is clearly a very useful metric for high BM investors. Piotroski’s key insight is that, despite the strong mean performance of a high BM portfolio, a majority of the firms (approximately 57%) earn negative market-adjusted returns over the one- and two-year windows. The F_SCORE is designed to eliminate the left tail of the return distribution (i.e., the negative return observations). It succeeds in doing so, and the resulting returns to high BM and high F_SCORE portfolios are nothing short of stunning.